Kubernetes Megacluster: Reviewing Cloud Provider Engineering Beyond Scalability Limits

Upstream Kubernetes SIG Scalability has long treated 5,000 nodes as the large-cluster threshold, backed by documented scalability goals and guidance [1][2]. In practice, though, major cloud providers and companies have been pushing well beyond that mark for years. GKE demonstrated 130K nodes by replacing etcd with Spanner. EKS reached 100K nodes by deeply reworking etcd. ByteDance built its own control-plane stack to manage 20K+ nodes, while OpenAI documented the operational path from 2,500 to 7,500 nodes.

The technical paths are very different, but the objective is the same: keep Kubernetes usable for ever-larger AI and platform workloads.

This article walks through the main bottlenecks, compares the approaches different platforms have taken, and closes with the broader design patterns now emerging at megacluster scale.

mindmap
  root((K8s Megacluster<br>Landscape))
    Bottlenecks
      etcd - Raft/BoltDB
      kube-scheduler - serialized path
      API Server - lock contention
      networking and DNS
    Approaches
      GKE - Spanner 130K
      EKS - etcd offload 100K
      AKS - Fleet Manager 5K
      ByteDance - KubeWharf 20K+
      OpenAI - 2.5K to 7.5K
      Alibaba Cloud - large fleet management
    Scaling philosophies
      single-cluster vertical scaling
      multi-cluster horizontal scaling
      fully custom control plane
    Future directions
      standardized etcd alternatives
      MultiKueue
      DRA
      gang scheduling

Why 5,000 Nodes Still Matters

Before comparing vendor strategies, it helps to understand why 5,000 nodes still shows up so often in Kubernetes discussions.

SIG Scalability defines service-level indicators and objectives for large clusters [1], including:

- Mutating API latency: for single-object create, update, and delete requests, p99 within 1 second

- Read-only API latency: p99 within 1 second for single-object reads, and within 30 seconds for namespace-scoped or cluster-scoped list requests

- Pod startup latency: from Pod creation until the container reports started, excluding image pulls and init containers, p99 within 5 seconds
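As a rough illustration of how these targets might be checked against measured data, the sketch below computes a nearest-rank p99 over latency samples and compares it with the thresholds listed above. The function names, dictionary keys, and sample values are assumptions made for this example; they are not part of any Kubernetes tooling.

```python
# Thresholds in seconds, taken from the SLO list above.
SLO_P99_SECONDS = {
    "mutating_api": 1.0,
    "pod_startup": 5.0,
}


def p99(samples):
    """Nearest-rank 99th percentile of a non-empty list of latencies."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    # Integer ceiling of 0.99 * n, which avoids floating-point rounding.
    rank = (99 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def meets_slo(kind, samples):
    """True when the observed p99 is within the threshold for this kind."""
    return p99(samples) <= SLO_P99_SECONDS[kind]


# Hypothetical latencies in seconds: 99 fast requests and one slow outlier.
mutating_samples = [0.05] * 99 + [3.0]
print(p99(mutating_samples), meets_slo("mutating_api", mutating_samples))
```

With 100 samples a single slow request does not move the p99, but a second one does, which is why tail behavior under churn matters more than average latency.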

These targets are designed around the upstream-tested large-cluster envelope. Once you move materially beyond that scale, the familiar pressure points become more visible: etcd write latency rises, scheduler throughput becomes harder to sustain, and API server informer caches start to contend on shared locks. The Kubernetes large-cluster guidance also explicitly warns that performance can degrade beyond the documented large-cluster target [2].

To understand why the vendor strategies diverge so much, it helps to look at those bottlenecks more closely.

Bottleneck Breakdown

flowchart TD
  A["Client Request"] --> B["kube-apiserver"]
  B --> C["etcd (BoltDB)"]
  B --> D["Informer Cache"]
  B --> E["kube-scheduler"]
  E --> F["Node Assignment"]
  F --> G["kubelet"]
  G --> H["Pod Running"]
  C -.- C1["❌ Raft consensus overhead"]
  C -.- C2["❌ Disk I/O bottleneck"]
  C -.- C3["❌ Default size limits"]
  D -.- D1["❌ RW lock contention<br>PR #132132, #130767"]
  E -.- E1["❌ Serialized scheduling path<br>~100-500 pods/sec"]
  G -.- G1["❌ Network and DNS issues<br>at 5K+ nodes"]
  style C fill:#ff6b6b,color:#fff
  style E fill:#ffa94d,color:#fff
  style D fill:#ffa94d,color:#fff
  style G fill:#ffe066,color:#333

etcd: The Most Direct Bottleneck

etcd stores Kubernetes control-plane state and uses BoltDB as its underlying storage engine. Replication happens through Raft, which protects consistency and durability, but at very large scale it also introduces three familiar problems:

- Raft coordination overhead: every write has to move through consensus, which adds latency and limits throughput

- Disk I/O pressure: write-ahead log (WAL) writes and snapshots become increasingly expensive under heavy churn

- Database growth limits: default etcd size limits and operational overhead become harder to manage as object counts rise

That is why GKE, EKS, and ByteDance all optimize the storage layer first, even though they do so in very different ways.
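To make the database-growth point concrete, here is a back-of-the-envelope sketch. It relies on etcd's default 2 GiB backend quota and the 8 GiB maximum suggested in the etcd documentation; the Pods-per-node figure and the average object size are assumptions chosen only for illustration, and the estimate deliberately ignores revision history and every resource type other than Nodes and Pods.

```python
# etcd's default backend quota is 2 GiB, and its documentation suggests
# 8 GiB as a practical maximum for --quota-backend-bytes.
DEFAULT_QUOTA_BYTES = 2 * 1024**3
SUGGESTED_MAX_BYTES = 8 * 1024**3


def estimated_live_bytes(nodes, pods_per_node, avg_object_bytes):
    """Rough live-data size: one object per node plus one per Pod.

    Ignores MVCC revision history, fragmentation, and every other
    resource type, so real databases will be larger.
    """
    objects = nodes + nodes * pods_per_node
    return objects * avg_object_bytes


def quota_verdict(live_bytes):
    """Classify an estimate against the two etcd size thresholds."""
    if live_bytes <= DEFAULT_QUOTA_BYTES:
        return "fits default quota"
    if live_bytes <= SUGGESTED_MAX_BYTES:
        return "needs a raised quota"
    return "exceeds suggested maximum"


# Assumed workload shape: 30 Pods per node, 4 KiB per stored object.
for nodes in (5_000, 100_000):
    size = estimated_live_bytes(nodes, pods_per_node=30, avg_object_bytes=4096)
    print(f"{nodes:>7} nodes: {size / 1024**3:.2f} GiB, {quota_verdict(size)}")
```

Under these assumptions a 5,000-node cluster sits comfortably inside the default quota, while a 100,000-node cluster overshoots even the suggested maximum before any history or auxiliary objects are counted.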

kube-scheduler: The Ceiling of a Mostly Serialized Scheduling Path

The default scheduler is not designed around extreme levels of parallel pod placement. When a workload needs to place thousands of Pods quickly, especially for distributed AI training, scheduler throughput becomes a real limiter. ByteDance’s Gödel scheduler reports throughput around 5,000 pods/sec [3], far above the rough 100 to 500 pods/sec that operators typically associate with the default upstream path.
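A small calculation shows what those throughput figures mean for a large job. The sketch below assumes a hypothetical 50,000-Pod training job and a constant placement rate, which real schedulers will not sustain exactly; the rates are the rough figures quoted in this article.

```python
def seconds_to_place(pod_count, pods_per_second):
    """Time to bind every Pod at a constant scheduling rate."""
    if pods_per_second <= 0:
        raise ValueError("pods_per_second must be positive")
    return pod_count / pods_per_second


JOB_PODS = 50_000  # hypothetical job size

# Rates quoted in this article: the rough upstream range and Gödel's figure.
for label, rate in (("upstream low", 100), ("upstream high", 500), ("Gödel", 5_000)):
    print(f"{label:>13}: {seconds_to_place(JOB_PODS, rate):.0f} s")
```

At 100 pods/sec the job waits more than eight minutes just for placement, during which already-bound accelerators may sit idle; at 5,000 pods/sec the same placement takes about ten seconds.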

API Server: Informer Cache Lock Contention

At extreme scale, the API server and controller path can hit lock contention in informer caches. During EKS ultra-scale testing, AWS identified and upstreamed fixes for two important cases:

- PR #132132 [4]: reduced informer-cache RW lock contention that delayed event handling in controller paths

- PR #130767 [5]: addressed informer-cache lock contention affecting API server and controller-manager behavior

Those fixes benefit the broader Kubernetes ecosystem, not just EKS. In addition, strongly consistent reads from cache, introduced in Kubernetes v1.31, reduce direct etcd read pressure by serving those reads from the API server's cache instead of etcd.

Networking and DNS

OpenAI’s published experience scaling from 2,500 to 7,500 nodes shows that networking issues often surface before the control plane fully falls over [6][7]:

- Flannel throughput limits: OpenAI reported roughly 2 Gbit/s between Pods before moving to Azure native CNI

- KubeDNS hotspot behavior: scheduling concentration and upstream DNS limits created painful hotspots

- ARP cache overflow: very large Pod-IP counts could overflow neighbor tables and disrupt node-to-node communication

At this scale, “small” networking issues stop being small. They become cluster-wide failure modes.
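The ARP overflow case can be sanity-checked with simple arithmetic. The sketch below compares a worst-case count of peer Pod IPs with the Linux default for `net.ipv4.neigh.default.gc_thresh3` (1024 entries). It assumes a flat network in which every remote Pod IP can become a direct neighbor entry, and the Pods-per-node value is hypothetical; routed or overlay designs will behave differently.

```python
# Linux default for net.ipv4.neigh.default.gc_thresh3, the hard cap on
# neighbor (ARP) table entries.
DEFAULT_GC_THRESH3 = 1024


def peer_pod_ips(nodes, pods_per_node):
    """Pod IPs a single node could need to resolve, excluding its own Pods."""
    return (nodes - 1) * pods_per_node


def neighbor_table_overflows(nodes, pods_per_node, gc_thresh3=DEFAULT_GC_THRESH3):
    """True when the worst-case peer count exceeds the neighbor-table cap."""
    return peer_pod_ips(nodes, pods_per_node) > gc_thresh3


# Assumed density of 10 Pods per node.
print(peer_pod_ips(7_500, 10), neighbor_table_overflows(7_500, 10))
```

Even a modest Pod density puts the worst case far above the default cap at 7,500 nodes, so the threshold has to be raised or the network design has to keep most Pod IPs out of the neighbor table.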

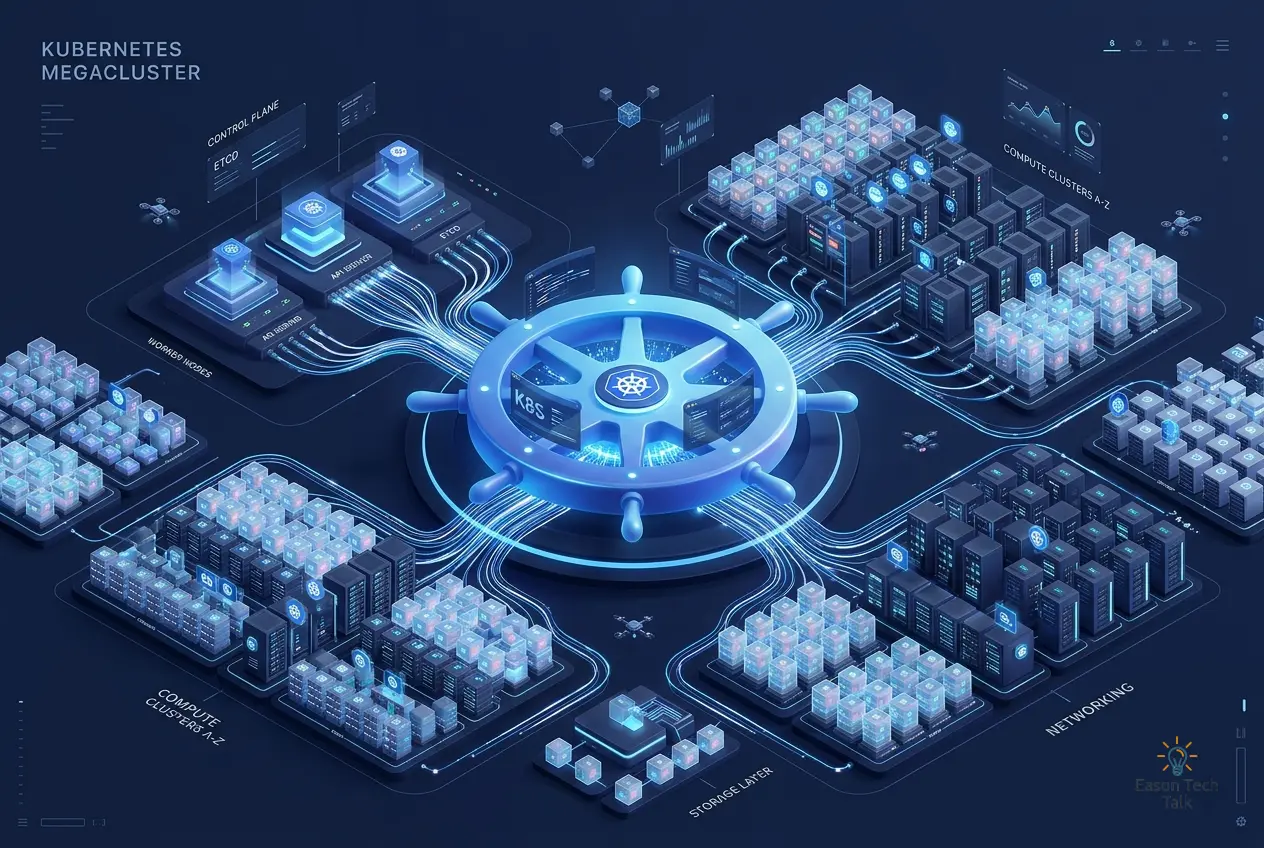

Platform Strategies

At a high level, the market has converged on three responses to the same scaling problem:

- re-architect the single-cluster control plane

- scale out across multiple clusters

- build a more customized platform stack end to end

The platform examples below map cleanly onto those patterns.

GKE: Replace etcd with Spanner

Google’s approach is the cleanest architectural break from upstream assumptions: replace etcd with Spanner.

Spanner gives GKE a distributed storage backend designed for horizontal scale and externally consistent transactions. That avoids etcd’s classic single-system bottlenecks and lets GKE scale from a fundamentally different starting point. According to Google’s published benchmark [8], GKE reached:

- 130,000 nodes in a demonstration environment

- official support for 65K nodes on GKE v1.31+

- around 1,000 pods/sec of scheduling throughput

- 1M+ Kubernetes objects

Architecturally, it is an elegant solution. The tradeoff is just as clear: it is deeply coupled to Google Cloud infrastructure and much farther from upstream Kubernetes assumptions than a standard etcd-based control plane.

In practice, Google also says customers are already running 20K to 65K-node clusters for large TPU and GPU training environments [8].

EKS: Reworking etcd at Scale

AWS chose the opposite route. Instead of replacing etcd, it kept etcd and heavily modified the implementation around it while preserving Kubernetes conformance expectations [9]:

- Consensus offload: Raft coordination is offloaded into an AWS journal layer, reducing etcd’s own consensus burden

- Key-space partitioning: partitions increase scale and push supported object counts past 10M

- BoltDB moved to tmpfs: once durability is handled elsewhere, moving BoltDB off EBS and into memory-backed storage cuts latency substantially
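To illustrate what key-space partitioning can look like, the sketch below routes Kubernetes storage keys to partitions by resource type. This is one plausible scheme and not a description of AWS's implementation, which is not detailed here. It assumes the common `/registry/<resource>/<namespace>/<name>` key layout; `resource_prefix` and `partition_for` are hypothetical helper names.

```python
import zlib


def resource_prefix(key):
    """Extract the resource type, e.g. '/registry/pods/default/web-0' -> 'pods'."""
    parts = key.strip("/").split("/")
    if len(parts) < 2 or parts[0] != "registry":
        raise ValueError(f"unexpected key layout: {key!r}")
    return parts[1]


def partition_for(key, partition_count):
    """Map a key to a partition so that one resource type stays together.

    crc32 is used because it is stable across processes, unlike Python's
    built-in hash() for strings.
    """
    digest = zlib.crc32(resource_prefix(key).encode("utf-8"))
    return digest % partition_count


keys = [
    "/registry/pods/default/web-0",
    "/registry/pods/kube-system/dns-1",
    "/registry/leases/kube-node-lease/node-1",
]
for key in keys:
    print(key, "->", partition_for(key, partition_count=8))
```

Partitioning on the resource type keeps list and watch requests for one kind of object inside a single partition, at the cost of uneven load when one type, typically Pods, dominates the key space.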

EKS reached the 100K-node milestone in GA [10], with AWS positioning the platform for very large AI environments, including public references to up to 1.6M Trainium chips or 800K GPUs in a single cluster.

AWS also published unusually detailed operational data. In its test environment:

- nodes joined at roughly 2,000 nodes per minute

- the full 100K-node cluster came up in about 50 minutes

- around 70K nodes ran fine-tuning workloads

- around 30K nodes ran large inference workloads through LeaderWorkerSet
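The figures above are internally consistent, which a short check makes explicit. The sketch assumes a constant join rate, which is a simplification of any real ramp-up.

```python
def minutes_to_join(total_nodes, nodes_per_minute):
    """Time for a cluster to reach full size at a constant join rate."""
    if nodes_per_minute <= 0:
        raise ValueError("nodes_per_minute must be positive")
    return total_nodes / nodes_per_minute


TOTAL_NODES = 100_000
JOIN_RATE = 2_000            # nodes per minute, as reported
FINE_TUNING_NODES = 70_000   # approximate split reported by AWS
INFERENCE_NODES = 30_000

print(minutes_to_join(TOTAL_NODES, JOIN_RATE))            # minutes to full size
print(FINE_TUNING_NODES + INFERENCE_NODES == TOTAL_NODES)  # split covers the cluster
```

The same function can be inverted mentally for planning: halving the bring-up time to 25 minutes would require sustaining roughly 4,000 node joins per minute.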

The environment was also highly tuned, with Trn2 and P-series instances, high EBS throughput, and SOCI Snapshotter baked into the AMI to reduce image-pull pressure. That makes the result impressive, but still somewhat idealized: it is not a generic production baseline, it says less about long-lived mixed-workload pressure, and it does nothing to soften the economics of operating clusters at this scale.

AKS: Scale Out with Fleet

Microsoft’s AKS strategy is notably more pragmatic. Microsoft documents 5,000 nodes / 200,000 Pods as the upper end of large single-cluster guidance for AKS [11], then uses Fleet Manager to scale across clusters rather than continuing to stretch one cluster indefinitely [12].

That approach has clear advantages: no need to rewrite core Kubernetes components, each cluster stays inside a better-understood operating envelope, and fault isolation is stronger across clusters. The drawback is that some distributed AI training patterns want one large, flat cluster network with direct peer-to-peer communication for collective operations such as all-reduce. That is one reason GKE and EKS keep pushing hard on single-cluster scale.
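For capacity planning under the scale-out model, the per-cluster limits translate directly into a minimum cluster count. The sketch below uses the 5,000-node and 200,000-Pod figures quoted above; the fleet sizes in the example are hypothetical, and a real plan would add headroom for upgrades and failure domains.

```python
MAX_NODES_PER_CLUSTER = 5_000
MAX_PODS_PER_CLUSTER = 200_000


def ceil_div(a, b):
    """Integer ceiling division without floating-point rounding."""
    return -(-a // b)


def clusters_needed(total_nodes, total_pods):
    """Smallest cluster count that respects both per-cluster limits."""
    by_nodes = ceil_div(total_nodes, MAX_NODES_PER_CLUSTER)
    by_pods = ceil_div(total_pods, MAX_PODS_PER_CLUSTER)
    return max(by_nodes, by_pods, 1)


print(clusters_needed(20_000, 600_000))  # node limit is the binding constraint
print(clusters_needed(12_000, 900_000))  # Pod limit is the binding constraint
```

Whichever limit binds first determines the fleet size, so dense clusters can need more members than their node count alone suggests.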

ByteDance: Build a Custom Control Plane

ByteDance took the most customized route of all through the KubeWharf ecosystem [13]:

- KubeBrain replaces etcd, backed by TiKV or byteKV, and is reported to support 20K+ nodes

- Gödel scheduler provides parallel scheduling and reports up to 5,000 pods/sec [3]

- Katalyst focuses on resource management and utilization

- KubeAdmiral adds multi-cluster orchestration and is described as managing very large service and Pod fleets [14]

This is the highest-control and highest-maintenance model. It can work for companies at TikTok scale, but it requires a serious platform engineering organization and gives up much of the benefit of staying close to upstream improvements.

OpenAI: Scaling on Azure

OpenAI’s experience is especially useful for teams that are not replacing the control plane and are not building a proprietary scheduler stack. Its published scaling journey happened in two stages:

- 2,500 nodes [6]: control-plane and networking weaknesses started to show up clearly.

- 7,500 nodes [7]: networking became the bigger story, including migration from Flannel to Azure native CNI to improve throughput and support much larger Pod-IP routing scale.

OpenAI also described the API-server cost of cluster-wide WATCH patterns, especially with large Endpoints objects. Moving to EndpointSlices reduced that burden dramatically, and the team also had to deal with Prometheus memory pressure at cluster scale. The big lesson is straightforward: before you ever hit 10K nodes, networking, DNS, and observability may hurt you first.
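The Endpoints versus EndpointSlices difference is easy to see with a simplified fan-out model. The sketch below assumes that one endpoint change causes every watcher to receive the whole affected object, and that EndpointSlices hold at most 100 endpoints each, which is the Kubernetes default. The per-endpoint byte size, the Service size, and the watcher count are hypothetical values.

```python
MAX_ENDPOINTS_PER_SLICE = 100  # Kubernetes default for EndpointSlices


def endpoints_update_bytes(endpoints, watchers, bytes_per_endpoint):
    """Bytes sent when one change re-sends the full Endpoints object."""
    return endpoints * bytes_per_endpoint * watchers


def endpointslice_update_bytes(endpoints, watchers, bytes_per_endpoint,
                               slice_size=MAX_ENDPOINTS_PER_SLICE):
    """Bytes sent when one change re-sends only the affected slice."""
    return min(endpoints, slice_size) * bytes_per_endpoint * watchers


# Hypothetical Service: 5,000 endpoints, one watcher per node on 7,500 nodes,
# and roughly 100 bytes of serialized data per endpoint.
full = endpoints_update_bytes(5_000, 7_500, 100)
sliced = endpointslice_update_bytes(5_000, 7_500, 100)
print(full, sliced, full // sliced)
```

In this model a single Pod restart costs gigabytes of API server egress with a monolithic Endpoints object and about fifty times less with slices, which matches the direction of the improvement OpenAI described.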

Alibaba Cloud: Fleet Scale

Alibaba Cloud highlights a different dimension of scale [15]: managing very large fleets of Kubernetes clusters, rather than only maximizing the size of one individual cluster. That reflects a different business need: multi-tenant fleet operations across many clusters instead of a small number of giant AI clusters.

Megacluster Comparison

| Dimension | GKE | EKS | AKS |
|---|---|---|---|
| Publicly discussed maximum scale | 130,000 nodes (demo) | 100,000 nodes (GA) | 5,000 nodes (documented single-cluster limit) |
| Production scale mentioned publicly | 20K-65K customer clusters | customer environments in the tens of thousands of nodes | scale-out via Fleet rather than beyond 5K in one cluster |
| etcd replacement strategy | ✅ Spanner | ✅ etcd retained but deeply reworked | ❌ etcd retained |
| Pod scheduling throughput | ~1,000 pods/sec | ~500 pods/sec | not publicly emphasized |
| Ultra-scale strategy | single-cluster vertical scaling | single-cluster vertical scaling | multi-cluster horizontal scaling |
| Primary AI accelerator focus | TPU + GPU | Trainium + GPU | GPU on Azure infrastructure |

Three Scaling Models

mindmap
  root((Beyond 5K Nodes<br>Three Strategies))
    1. Single-cluster scaling
      GKE
        Replace etcd with Spanner
        Demonstrated 130K nodes
        ~1,000 Pods/sec
      EKS
        Offload etcd consensus
        100K nodes GA
        Large accelerator counts in one cluster
    2. Multi-cluster federation
      Azure AKS Fleet Manager
        5K-node single-cluster target
        cross-cluster workload placement
        stronger fault isolation
      Alibaba Cloud
        fleet-wide cluster operations
        multi-tenant architecture
    3. Fully custom control plane
      ByteDance KubeWharf
        KubeBrain instead of etcd
        Gödel at 5,000 pods/sec
        KubeAdmiral for large fleets

Stepping back from the platform-by-platform details, the strategies fall into three broad philosophies:

1. Single-Cluster Vertical Scaling: GKE and EKS

This model modifies the control plane so one cluster can carry dramatically more nodes. It fits large distributed AI training jobs that want one scheduler domain and one network domain. The cost is that the control plane becomes more specialized, and the blast radius of one cluster grows with it.

2. Multi-Cluster Horizontal Scaling: AKS Fleet and Similar Models

This model keeps each cluster within a safer operating envelope and adds a higher-level fleet layer for scheduling and management. It is a better fit for microservices, regional isolation, and failure-domain separation. The downside is that it does not map as neatly onto tightly coupled training jobs that expect direct full-cluster communication.

3. Fully Custom Control Planes: ByteDance

This model removes most upstream constraints by rebuilding the stack. It is only realistic for organizations with very large platform teams and strong reasons to own the full control-plane path.

Shared Patterns

Even though the implementations differ, several patterns are becoming clear.

Some kind of etcd workaround is now common at extreme scale. GKE replaces it with Spanner, EKS retains it but offloads consensus and restructures storage, and ByteDance replaces it through KubeBrain [8][9][13]. Once clusters push far past the upstream-tested range, control-plane storage almost always becomes the first thing people redesign.

Scheduling is getting richer across clusters and inside them. MultiKueue offers a way to combine multi-cluster isolation with job-level queueing and placement across clusters, which is especially relevant for Fleet-style architectures. At the same time, gang scheduling is moving from “nice to have” to “table stakes” for large AI clusters, where partial placement wastes expensive accelerator capacity.
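The core idea of gang scheduling, all-or-nothing placement, fits in a few lines. The sketch below is a toy model rather than any scheduler's actual algorithm: it assumes identical Pods, a single GPU resource, and a hypothetical `gang_place` function that returns a full placement or nothing at all.

```python
def gang_place(free_gpus, pods, gpus_per_pod):
    """Return {node: pod_count} only if every Pod in the gang fits.

    free_gpus maps node name to free GPU count. Nothing is reserved
    unless the whole gang can be placed, which is the point of gang
    scheduling: no partial placement holding accelerators idle.
    """
    if pods <= 0 or gpus_per_pod <= 0:
        raise ValueError("pods and gpus_per_pod must be positive")
    placement = {}
    remaining = pods
    # Fill the emptiest nodes first to keep the gang on fewer hosts.
    for node, free in sorted(free_gpus.items(), key=lambda kv: -kv[1]):
        fit = min(free // gpus_per_pod, remaining)
        if fit > 0:
            placement[node] = fit
            remaining -= fit
        if remaining == 0:
            return placement
    return None  # gang does not fit; leave all capacity untouched


cluster = {"a": 8, "b": 8, "c": 4}  # hypothetical free GPUs per node
print(gang_place(cluster, pods=5, gpus_per_pod=4))
print(gang_place(cluster, pods=6, gpus_per_pod=4))
```

The second call returns `None` even though five of the six Pods would fit, which is exactly the behavior that prevents a stalled training job from pinning twenty GPUs while it waits for the last worker.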

DRA (Dynamic Resource Allocation) becomes more important as accelerator counts explode. At this scale, device plugins are often too coarse. AI workloads increasingly need topology-aware placement, such as multiple GPUs on the same host with the right NVLink or PCIe relationships.
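Topology-aware placement can be sketched independently of any particular API. The example below is not the DRA API; it is a hypothetical selection routine that prefers GPUs sharing one interconnect domain on one host and only then falls back to spanning domains on the same host. The inventory structure, the domain labels, and the `pick_gpus` name are all assumptions made for illustration.

```python
def pick_gpus(inventory, count):
    """Choose `count` GPUs from one host, preferring a single link domain.

    inventory maps host -> {link_domain: [gpu_ids]}.
    Returns (host, gpu_ids, same_domain) or None when no host can serve
    the request.
    """
    # First pass: all GPUs from one interconnect domain on one host.
    for host in sorted(inventory):
        for domain in sorted(inventory[host]):
            gpus = inventory[host][domain]
            if len(gpus) >= count:
                return host, gpus[:count], True
    # Second pass: stay on one host but allow spanning domains.
    for host in sorted(inventory):
        all_gpus = [g for d in sorted(inventory[host]) for g in inventory[host][d]]
        if len(all_gpus) >= count:
            return host, all_gpus[:count], False
    return None


inventory = {
    "host-a": {"nvlink-0": ["gpu0", "gpu1"], "nvlink-1": ["gpu2"]},
    "host-b": {"nvlink-0": ["gpu0", "gpu1", "gpu2", "gpu3"]},
}
print(pick_gpus(inventory, 4))
print(pick_gpus({"host-a": inventory["host-a"]}, 3))
```

A count-only device plugin would treat both results as equivalent, while a topology-aware allocator can distinguish the tightly connected set from the one that crosses a slower link.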

Conclusion

The technical choices across these platforms reflect different product goals and different engineering tradeoffs:

- GKE takes the cleanest architectural break by replacing etcd with Spanner, and currently shows the highest published single-cluster ceiling.

- EKS stays closer to upstream assumptions while heavily reworking the internals around etcd, making it attractive for organizations that want both scale and stronger Kubernetes conformance alignment.

- AKS takes the pragmatic multi-cluster route, which is often the more operationally conservative choice.

- ByteDance shows that a fully custom control plane can push well past upstream limits, if you are willing to own the platform engineering cost.

For most teams, divide and conquer is still the safer default. But as AI training continues to demand larger pools of tightly coordinated accelerators, the practical ceiling of a single Kubernetes cluster will keep rising.

The next wave of progress will likely center on more standardized storage alternatives to etcd, better cross-cluster scheduling through MultiKueue, richer accelerator-aware scheduling with DRA, and more mature gang-scheduling semantics. The harder question will not be whether Kubernetes can reach these scales in a benchmark, but whether teams can operate them sustainably in production.

References

- [3] 5,000 pods/second and 60% utilization with Godel and Katalyst (KubeFM)

- [8] How we built a 130,000-node GKE cluster (Google Cloud Blog)

- [10] Amazon EKS enables ultra scale AI/ML workloads with support for 100K nodes per cluster

- [11] Performance and scaling best practices for large workloads in AKS

- [12] Limitless Kubernetes Scaling for AI and Data-intensive Workloads: The AKS Fleet Strategy

- [14] KubeAdmiral: next-generation multi-cluster orchestration engine (CNCF)