A Kueue Test Cleanup That Explains TAS Rank and Greedy Assignment

When running distributed GPU or TPU training, we usually want the Pods in the same workload placed on machines that are physically close to each other, such as within the same rack or data center block. The closer the nodes are, the lower the network latency tends to be, and the better the training efficiency. Kueue addresses this with Topology Aware Scheduling (TAS): when a workload is admitted, it calculates a placement plan ahead of time that says where each Pod should go based on topology.

But there is an easy-to-miss problem here: that placement plan is computed before the workload actually starts running. By the time the Pods are created or replaced, the real cluster state may already have changed. A node may fail, a Pod may be evicted, or a machine may be replaced. At that point, the original plan can drift away from reality. Handling that drift is one of the subtlest but most important parts of TAS.

While contributing to Kueue recently, I submitted a small test cleanup PR, #9796. On the surface, it only removed unnecessary JobSet-specific code from a test. But while cleaning it up, I realized that the test is a very good way to explain three core TAS concepts:

- Rank: a stable numeric identity for a Pod within a workload

- TopologyAssignment: the placement plan computed before the workload starts

- Greedy Assignment: the fallback mechanism used when the original plan no longer matches runtime reality

This article walks through that test case step by step to build an intuitive understanding of how Kueue TAS works.

A Quick Overview of Kueue Topology Aware Scheduling

Kueue Topology Aware Scheduling (TAS) is an advanced scheduling feature. It cares not only about how many CPUs, GPUs, or TPUs a workload needs, but also about where those Pods should be placed in the topology hierarchy.

According to the Kueue documentation, TAS uses node topology labels to represent the hierarchical structure of a data center, for example:

- cloud.provider.com/topology-block: a data center block

- cloud.provider.com/topology-rack: a rack

- kubernetes.io/hostname: an individual host at the lowest level

During workload admission, Kueue uses these topology labels to compute a TopologyAssignment. You can think of it as a placement plan that maps each Pod to a specific topology domain.

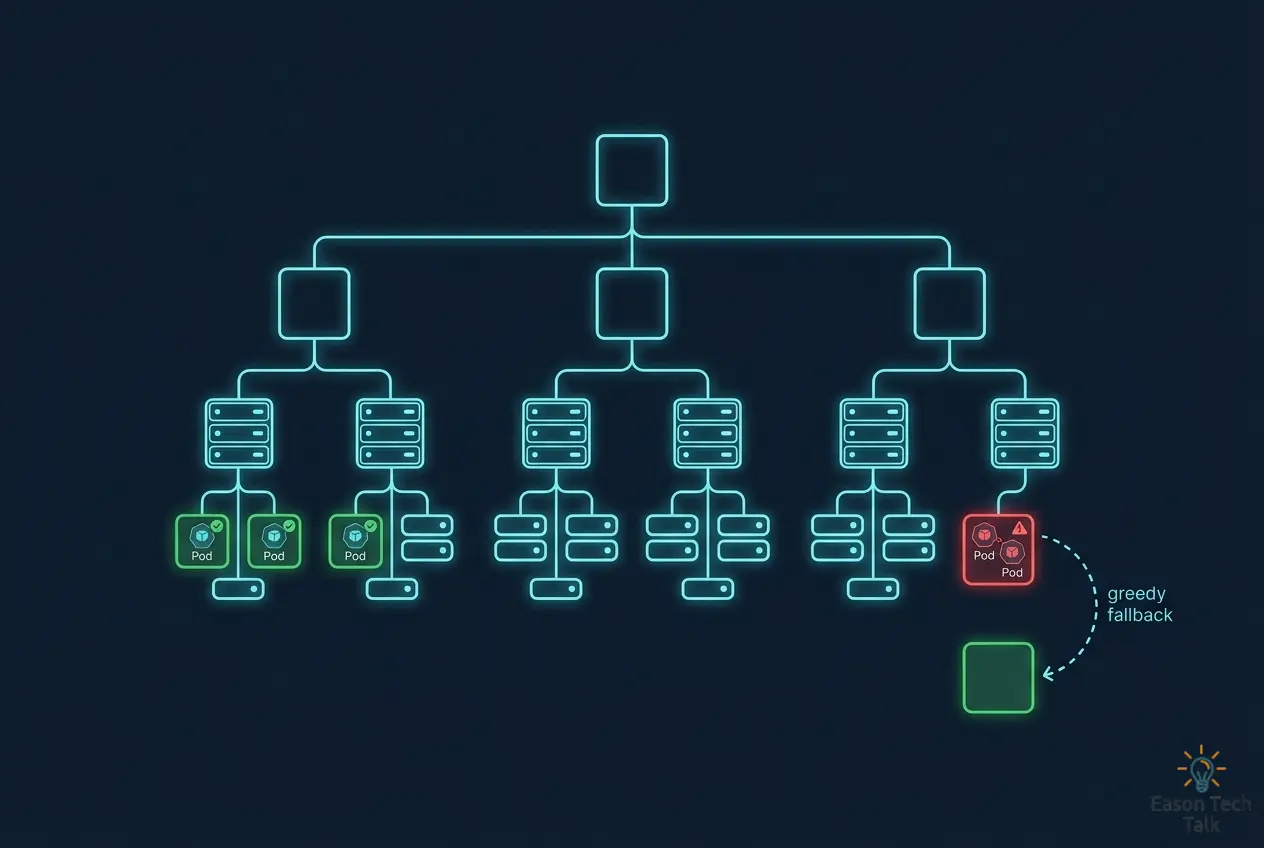

The Topology Tree

Kueue combines ResourceFlavors, topology configuration, and Nodes to organize cluster nodes into a topology tree. Each vertex in that tree is a topology domain, and each level represents a physical or logical boundary. A typical four-level topology runs Zone -> Block -> Rack -> Node, and each level maps to a Kubernetes label:

| Topology Level | Topology Label | Description |
|---|---|---|
| Zone | cloud.provider.com/topology-zone | Availability zone, the highest level |
| Block | cloud.provider.com/topology-block | A block within the data center |
| Rack | cloud.provider.com/topology-rack | A rack within a block |
| Node | kubernetes.io/hostname | Hostname, the lowest level |
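To make the hierarchy concrete, here is a minimal Python sketch that nests nodes by the labels in the table. It is only an illustration. Kueue itself is written in Go and builds its tree from ResourceFlavor and topology configuration. The function name build_topology_tree and the nested-dict shape are assumptions made for this example.

```python
from __future__ import annotations

# Level label keys from the table, highest level first.
LEVELS = [
    "cloud.provider.com/topology-zone",
    "cloud.provider.com/topology-block",
    "cloud.provider.com/topology-rack",
    "kubernetes.io/hostname",
]


def build_topology_tree(nodes: dict[str, dict[str, str]]) -> dict:
    """Group hostnames into a zone -> block -> rack -> [hostnames] tree.

    `nodes` maps a node name to its labels. Nodes missing any level label
    are skipped because they cannot be placed in the hierarchy.
    """
    tree: dict = {}
    for _, labels in sorted(nodes.items()):
        if not all(level in labels for level in LEVELS):
            continue
        zone, block, rack, host = (labels[level] for level in LEVELS)
        # setdefault creates each intermediate domain the first time it is seen.
        tree.setdefault(zone, {}).setdefault(block, {}).setdefault(rack, []).append(host)
    return tree


nodes = {
    "x1": dict(zip(LEVELS, ["z1", "b1", "r1", "x1"])),
    "x2": dict(zip(LEVELS, ["z1", "b1", "r1", "x2"])),
    "x3": dict(zip(LEVELS, ["z1", "b1", "r2", "x3"])),
}
print(build_topology_tree(nodes))
# {'z1': {'b1': {'r1': ['x1', 'x2'], 'r2': ['x3']}}}
```

Each rack ends up as a leaf list of hostnames, so walking the dict from the top visits exactly the Zone -> Block -> Rack -> Node path a placement decision would consider.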

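When placing Pods, TAS uses the workload’s topology request, set through the kueue.x-k8s.io/podset-required-topology or kueue.x-k8s.io/podset-preferred-topology annotation, to keep the group as compact as possible. With a preferred level of rack, for example, TAS first tries to place all Pods in the same rack. If no single rack has enough capacity, it moves up to a larger topology domain, such as several racks in the same block. A required level does not fall back this way: if no single rack can hold every Pod, the workload cannot be admitted.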
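The level-by-level search can be sketched in a few lines of Python. This is a simplified model, not Kueue's algorithm. Each host has a number of free Pod slots, a domain's capacity is the sum over its hosts, and the first fitting domain in sorted order wins (Kueue's real domain selection is more involved). The allow_fallback flag controls whether the search may move up a level when nothing fits. In Kueue terms, moving up is the behavior of a preferred topology request, while a required request stays at its level. All names here are hypothetical.

```python
from __future__ import annotations

LEVELS = ["zone", "block", "rack", "hostname"]  # highest level first


def domain_free_slots(hosts: dict[tuple[str, ...], int], level: str) -> dict[tuple[str, ...], int]:
    """Sum the free Pod slots of every host into its enclosing domain at `level`.

    Host keys are (zone, block, rack, hostname) paths, so truncating a path
    to the level's depth yields the domain that contains the host.
    """
    depth = LEVELS.index(level) + 1
    totals: dict[tuple[str, ...], int] = {}
    for path, free in hosts.items():
        totals[path[:depth]] = totals.get(path[:depth], 0) + free
    return totals


def pick_domain(
    hosts: dict[tuple[str, ...], int],
    pod_count: int,
    level: str,
    allow_fallback: bool,
) -> tuple[str, ...] | None:
    """Find one domain that fits all Pods, starting at `level` and moving up."""
    for idx in range(LEVELS.index(level), -1, -1):
        free = domain_free_slots(hosts, LEVELS[idx])
        # Simplification: take the first fitting domain in sorted order.
        fitting = sorted(d for d, n in free.items() if n >= pod_count)
        if fitting:
            return fitting[0]
        if not allow_fallback:
            return None  # stay at the requested level
    return None


hosts = {
    ("z1", "b1", "r1", "x1"): 1,
    ("z1", "b1", "r1", "x2"): 1,
    ("z1", "b1", "r2", "x3"): 2,
}
print(pick_domain(hosts, 3, "rack", allow_fallback=False))  # None
print(pick_domain(hosts, 3, "rack", allow_fallback=True))   # ('z1', 'b1')
```

Three Pods do not fit in either two-slot rack. With fallback the search settles on the block that contains both racks. Without fallback it reports no placement.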

Rank: A Stable Pod Identity Within the Workload

In TAS, the word “rank” is easy to misunderstand. It does not mean priority, and it is not the distributed-training rank used by NCCL or MPI. Here, rank simply means:

- A stable numeric identity assigned to each Pod within the same workload.

For an Indexed Job, Kubernetes assigns each Pod an index from 0 to .spec.completions - 1 and exposes it through batch.kubernetes.io/job-completion-index as both a Pod annotation and a Pod label (see the Kubernetes Jobs documentation). For example:

```text
workload
└─ Indexed Job
   ├─ pod A (job-0) -> rank 0
   └─ pod B (job-1) -> rank 1
```
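Reading a rank back from a Pod is just a matter of parsing that completion index. The following minimal sketch works over Pods represented as plain dicts shaped like their Kubernetes JSON. The helper pod_rank is hypothetical and is not a Kueue function.

```python
from __future__ import annotations

# Key Kubernetes uses for the Indexed Job completion index.
COMPLETION_INDEX = "batch.kubernetes.io/job-completion-index"


def pod_rank(pod: dict) -> int | None:
    """Return the Pod's rank from its completion index, or None if it has none."""
    metadata = pod.get("metadata", {})
    # The index is exposed as both a label and an annotation; accept either.
    for source in ("labels", "annotations"):
        value = metadata.get(source, {}).get(COMPLETION_INDEX)
        if value is not None:
            return int(value)
    return None


pods = [
    {"metadata": {"name": "job-1", "labels": {COMPLETION_INDEX: "1"}}},
    {"metadata": {"name": "job-0", "annotations": {COMPLETION_INDEX: "0"}}},
]
# Sorting by rank gives the stable order that rank-based placement relies on.
print([p["metadata"]["name"] for p in sorted(pods, key=pod_rank)])  # ['job-0', 'job-1']
```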

TopologyAssignment: The Plan Computed at Admission Time

Once Kueue finishes admission, it produces a TopologyAssignment that maps each rank to a specific topology domain. Suppose the required topology level is kubernetes.io/hostname. A workload with two Pods might produce this assignment:

| Rank | Pod | Assigned Node |
|---|---|---|
| 0 | Pod A | x3 |
| 1 | Pod B | x1 |

This assignment is fundamentally a plan. It is correct at the moment it is computed, but runtime events such as node failures or Pod eviction can make the actual cluster state diverge from that plan.
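In the Kueue API (at least in v1beta1), a TopologyAssignment is stored as a list of `levels` plus a list of `domains`, each with `values` and a Pod `count`, rather than as an explicit per-rank table. The sketch below assumes the simplest reading of how a rank table like the one above comes out of that shape: ranks fill the domains in list order. It uses plain dicts and is a model, not Kueue code. ranks_to_domains is a hypothetical helper.

```python
from __future__ import annotations

# Plain-dict stand-in for a TopologyAssignment at the hostname level.
example_assignment = {
    "levels": ["kubernetes.io/hostname"],
    "domains": [
        {"values": ["x3"], "count": 1},
        {"values": ["x1"], "count": 1},
    ],
}


def ranks_to_domains(assignment: dict) -> dict[int, tuple[str, ...]]:
    """Expand per-domain Pod counts into a rank -> domain map.

    Assumption: ranks fill domains in list order, so rank 0 takes the
    first slot of the first domain, and so on.
    """
    mapping: dict[int, tuple[str, ...]] = {}
    rank = 0
    for domain in assignment["domains"]:
        for _ in range(domain["count"]):
            mapping[rank] = tuple(domain["values"])
            rank += 1
    return mapping


print(ranks_to_domains(example_assignment))  # {0: ('x3',), 1: ('x1',)}
```

Because the mapping is derived purely from the stored plan, nothing in it reflects where Pods are actually running later.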

The Problem Scenario: Replacement Pod vs Running Pod

Where the Problem Comes From

This issue was originally reported in Issue #9210. The scenario looks like this:

Imagine a workload that is already running. During admission, Kueue computed a placement plan saying which node should host each Pod. Later, one of the nodes fails and needs replacement. At that moment, the runtime state becomes:

- Pod A: it was running on the failed node and is now terminating

- Pod A’s replacement: a new replacement Pod has already been created and is waiting to be ungated

- Pod B: it has continued running elsewhere and was not directly affected
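A Pod that is "waiting to be ungated" carries an entry in spec.schedulingGates, a standard Kubernetes Pod field. The scheduler does not consider a gated Pod until every gate is removed. The sketch below shows roughly what ungating involves for TAS, under two assumptions: the gate is named kueue.x-k8s.io/topology, and the ungater pins the Pod to its assigned domain through a node selector on that domain's topology labels. The function ungate is hypothetical.

```python
from __future__ import annotations

import copy

TAS_GATE = "kueue.x-k8s.io/topology"  # assumed gate name for TAS


def ungate(pod: dict, levels: list[str], values: list[str]) -> dict:
    """Return a copy of `pod` pinned to one topology domain, without the TAS gate."""
    updated = copy.deepcopy(pod)
    spec = updated.setdefault("spec", {})
    # Only nodes carrying every label of the assigned domain remain eligible.
    spec.setdefault("nodeSelector", {}).update(dict(zip(levels, values)))
    # Drop only the TAS gate; any other gate keeps blocking scheduling.
    spec["schedulingGates"] = [
        gate for gate in spec.get("schedulingGates", []) if gate.get("name") != TAS_GATE
    ]
    return updated


replacement = {
    "metadata": {"name": "p0-replacement"},
    "spec": {"schedulingGates": [{"name": TAS_GATE}]},
}
print(ungate(replacement, ["kubernetes.io/hostname"], ["x1"])["spec"])
# {'schedulingGates': [], 'nodeSelector': {'kubernetes.io/hostname': 'x1'}}
```

The interesting decision is which values to pass in. That is exactly where a stale plan can point the replacement at the wrong node.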

The problem appears when the controller decides where to place Pod A’s replacement. If it trusts only the original TopologyAssignment, without first checking which Pods are actually running on which nodes right now, it can place the replacement onto a node that is already occupied by another running Pod. That causes two Pods to collide on the same node, violating the intended TAS placement constraints.

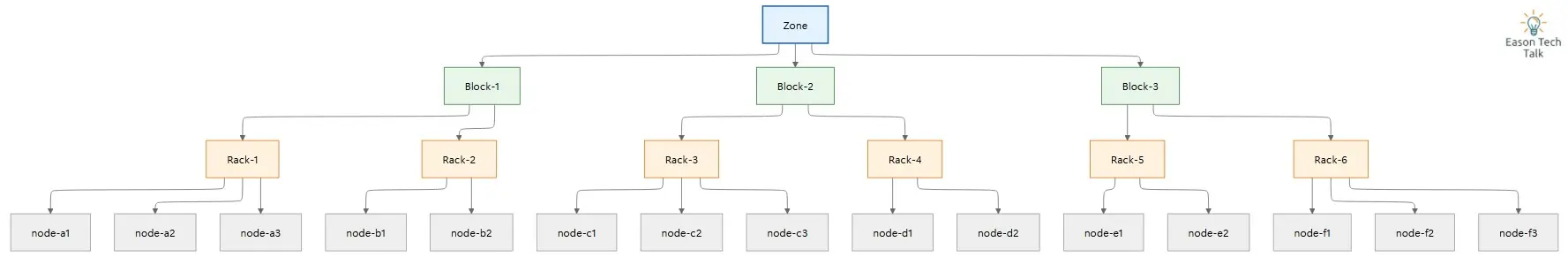

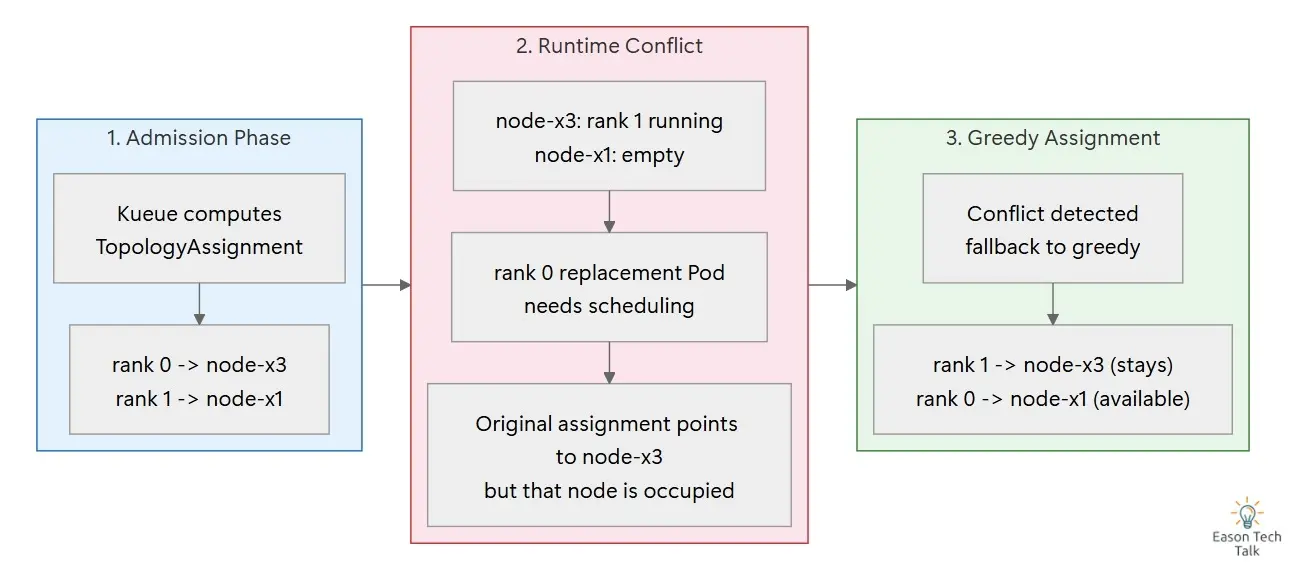

The diagrams show the three phases of this scenario:

- Step 1: after admission, Pod A and Pod B are each running on their assigned nodes

- Step 2: later, node-x3 fails. Pod A is terminating, Pod B has ended up running on node-x3, and node-x1 is now empty. At the same time, a new replacement Pod is waiting to be ungated

- Step 3: if the controller rigidly follows the old assignment (rank 0 -> node-x3), it will also place the replacement Pod on node-x3, creating a collision with the already running Pod

How the Test Reproduces It

The test named "should fallback to greedy assignment when replacement pod conflicts with already running pod" reproduces this situation directly (see PR #9796).

The original TopologyAssignment is:

```text
rank 0 -> node-x3
rank 1 -> node-x1
```

But the test intentionally constructs a runtime state that no longer matches the original assignment:

```text
node-x3: rank 1 (running)
node-x1: empty
```

In other words, the rank 1 Pod is already running on node-x3, which was originally planned for rank 0. If the controller still tries to place the replacement Pod for rank 0 onto node-x3, it will conflict with the running rank 1 Pod.
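The conflict in this test can be found by comparing the plan with live state before ungating anything. The following is a minimal sketch. It assumes one Pod per node at the hostname level, as in this test, and conflicting_ranks is a hypothetical helper rather than the ungater's real code.

```python
from __future__ import annotations


def conflicting_ranks(plan: dict[int, str], running: dict[str, int]) -> list[int]:
    """Return ranks whose planned node already runs a Pod of a different rank.

    plan: rank -> node from the admission-time assignment
    running: node -> rank of the Pod currently running there
    """
    return sorted(
        rank
        for rank, node in plan.items()
        if node in running and running[node] != rank
    )


plan = {0: "node-x3", 1: "node-x1"}
running = {"node-x3": 1}  # p1-running sits on the node planned for rank 0
print(conflicting_ranks(plan, running))  # [0]
```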

```text
Expected placement from the original Kueue plan
┌────────┬────────────┬──────────────┐
│ rank   │ pod        │ node         │
├────────┼────────────┼──────────────┤
│ 0      │ p0         │ x3           │
│ 1      │ p1         │ x1           │
└────────┴────────────┴──────────────┘

Actual runtime state (p1-running has ended up on x3)
┌────────┬──────────────┬──────────────┐
│ rank   │ pod          │ node         │
├────────┼──────────────┼──────────────┤
│ 1      │ p1-running   │ x3           │
└────────┴──────────────┴──────────────┘

Greedy assignment result (new rank 0 Pod goes to x1)
┌────────┬────────────────┬──────────────┐
│ rank   │ pod            │ node         │
├────────┼────────────────┼──────────────┤
│ 1      │ p1-running     │ x3           │
│ 0      │ p0-replacement │ x1           │
└────────┴────────────────┴──────────────┘
```

This is not a fabricated edge case. It reflects a real production risk: the topology assignment computed at admission time is a plan, but runtime state may no longer match that plan.

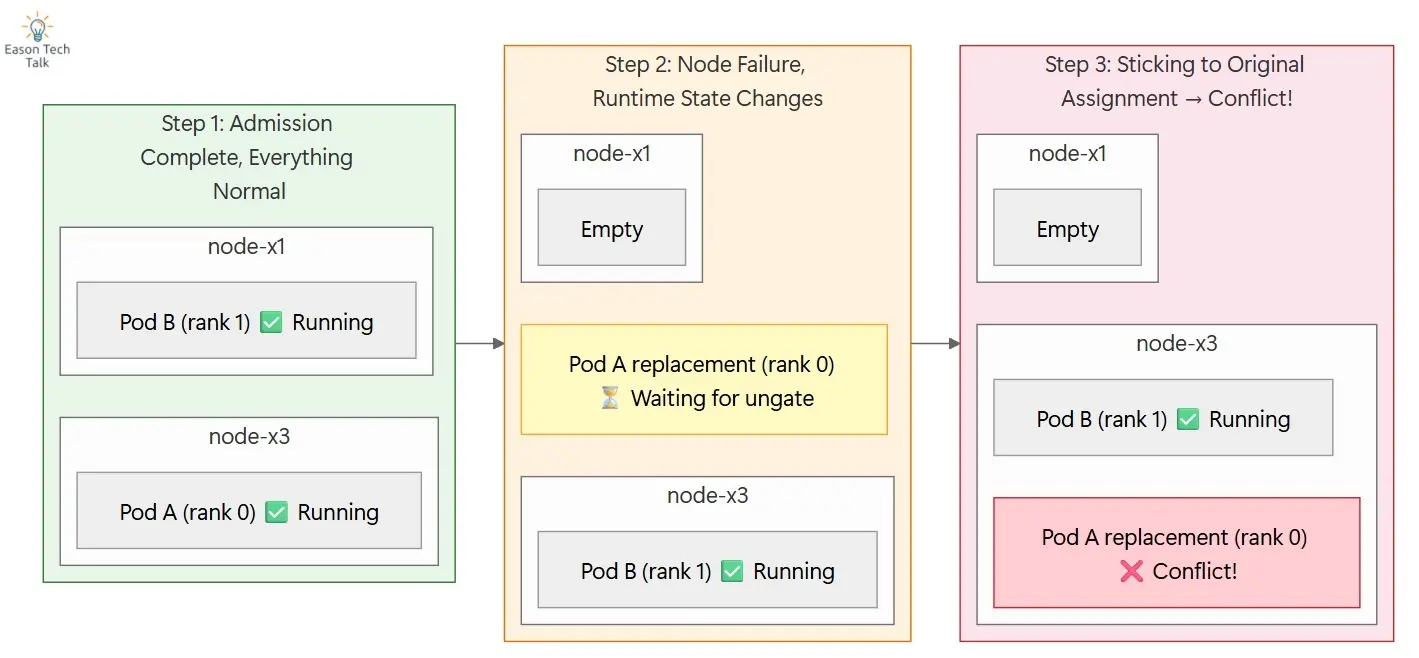

Greedy Assignment: The Safety Fallback

When the original placement plan no longer matches reality, the TAS topology ungater does not blindly force the old mapping. Instead, it falls back to greedy assignment, a mechanism that recomputes placement based on the current runtime state.

Greedy assignment is not a crude “put it anywhere” strategy. It follows clear design goals:

- Avoid collisions: never place a replacement Pod onto a node that already hosts another running Pod from the workload

- Check live runtime state first: if the old assignment is stale, pick placement based on what is actually free now

- Protect system correctness: it is better to choose a different valid location than to force an outdated mapping and break placement correctness
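These three goals can be modeled as a small greedy pass, sketched in Python under stated assumptions. Free capacity per domain is the planned Pod count minus the Pods already running there. Pending Pods, sorted by rank, each take the first domain in plan order that still has room. greedy_assign is a hypothetical name. Kueue's actual topology ungater is written in Go and handles more cases than this.

```python
from __future__ import annotations


def greedy_assign(
    domain_counts: dict[str, int],
    running: dict[str, list[str]],
    pending: list[str],
) -> dict[str, str]:
    """Assign pending Pods to domains that still have unused planned slots.

    domain_counts: planned Pod count per domain, in plan order
    running: domain -> names of Pods already running there
    pending: names of gated Pods, already sorted by rank
    """
    # Live state first: subtract Pods that already occupy each domain.
    free = {d: count - len(running.get(d, [])) for d, count in domain_counts.items()}
    result: dict[str, str] = {}
    for pod in pending:
        domain = next((d for d, n in free.items() if n > 0), None)
        if domain is None:
            break  # no valid slot left; keep the remaining Pods gated
        result[pod] = domain
        free[domain] -= 1
    return result


print(greedy_assign(
    {"node-x3": 1, "node-x1": 1},
    {"node-x3": ["p1-running"]},
    ["p0-replacement"],
))  # {'p0-replacement': 'node-x1'}
```

Since occupied slots are removed before any choice is made, a replacement cannot be sent to a node whose planned capacity a running Pod has already used up.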

TopologyAssignment and Greedy Fallback Flow

The diagram of the full TAS flow, from admission-time planning to runtime fallback, illustrates two key ideas:

- Topology Tree: Kueue organizes cluster nodes into a Zone -> Block -> Rack -> Node hierarchy using topology labels

- Admission -> Runtime -> Greedy Fallback: when the admission-time plan and runtime state diverge, the controller falls back to greedy assignment to preserve correct placement

The Correct Final Placement

In this test case, greedy assignment produces the following final result:

```text
p1-running (rank 1) -> node-x3
p0-replacement (rank 0) -> node-x1
```

The key point is not that rank 0 must remain on node-x3 at all costs. The key point is that the controller must not force an outdated rank-to-node mapping and end up placing two Pods on the same node.
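The property the test actually protects, that no two Pods end up on the same node, can be stated as a check. The following minimal sketch works over a pod -> node map and assumes the one-Pod-per-hostname setup of this test. The helper name colliding_nodes is hypothetical.

```python
from __future__ import annotations

from collections import Counter


def colliding_nodes(placement: dict[str, str]) -> list[str]:
    """Return nodes that are assigned more than one Pod in a pod -> node map."""
    per_node = Counter(placement.values())
    return sorted(node for node, n in per_node.items() if n > 1)


greedy = {"p1-running": "node-x3", "p0-replacement": "node-x1"}
stale = {"p1-running": "node-x3", "p0-replacement": "node-x3"}
print(colliding_nodes(greedy))  # []
print(colliding_nodes(stale))   # ['node-x3']
```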

From that perspective, greedy assignment is not a downgrade. It is a safety net that protects placement correctness when rank-based assignment has drifted away from runtime reality.

Why the Test Was Cleaned Up from JobSet to Job

What Was Wrong with the Original Test?

The original test used some JobSet-specific subgroup labels. But what the test actually verifies is much simpler: when the node corresponding to a Pod’s rank no longer matches runtime reality, does TAS correctly fall back to greedy assignment?

That rank identity can already be expressed with the native Kubernetes Indexed Job label batch.kubernetes.io/job-completion-index. JobSet is not required for this scenario.
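With the native label alone, a test fixture needs nothing JobSet-specific to express rank. The sketch below builds such Pods as plain dicts. indexed_job_pod is a hypothetical helper. Kueue's real tests are written in Go with their own builders, so this only shows the shape of the data, not the actual test code.

```python
from __future__ import annotations

COMPLETION_INDEX = "batch.kubernetes.io/job-completion-index"


def indexed_job_pod(job: str, index: int, node: str | None = None) -> dict:
    """Build a minimal Pod dict whose rank comes only from the native Job label."""
    pod = {
        "metadata": {
            "name": f"{job}-{index}",
            "labels": {COMPLETION_INDEX: str(index)},
        },
        "spec": {},
    }
    if node is not None:
        pod["spec"]["nodeName"] = node  # models a Pod already bound to a node
    return pod


running = indexed_job_pod("job", 1, node="node-x3")  # rank 1 on the node planned for rank 0
replacement = indexed_job_pod("job", 0)              # gated rank 0 replacement, not yet bound
```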

There is also a practical reason: the integration test environment typically does not install the JobSet CRD, as described in Issue #9284. Leaving JobSet-specific details in the test could also lead readers to think it validates JobSet integration behavior, when in fact the core logic is independent of JobSet.

What PR #9796 Changed

PR #9796 made a small but meaningful cleanup:

- removed JobSet-specific labels and configuration from the test

- used batch.kubernetes.io/job-completion-index to represent Pod rank

- made the test purely about native Job semantics, with no unnecessary JobSet coupling

The result is a clearer test: when rank-based placement conflicts with actual runtime state, can TAS correctly switch to greedy assignment? After the cleanup, the test expresses exactly that and nothing more.

Conclusion

This test cleanup is a good example of the balance between abstraction and fault tolerance in Kueue TAS. TopologyAssignment gives TAS an ideal placement plan, while greedy assignment provides the runtime safety mechanism that handles real-world drift.

The hard part of distributed scheduling is not computing a perfect plan once. It is preserving correctness when production conditions inevitably change. TAS handles that by separating planned placement from runtime fallback.

I hope this article makes the TAS design easier to understand, especially how rank, TopologyAssignment, and greedy assignment fit together, and why simplifying the test helps validate the real core behavior more clearly.

References

- Kueue PR #9796, "cleanup: use Job instead of JobSet in TAS rank ordering case"

- Kubernetes documentation, "Jobs" (Indexed completion mode)

- Kueue Issue #9210, "Wrong pod placement when node replacement is performed"

- Kueue Issue #9284, "Simplify test case for rank-based ordering to use Job rather than JobSet"